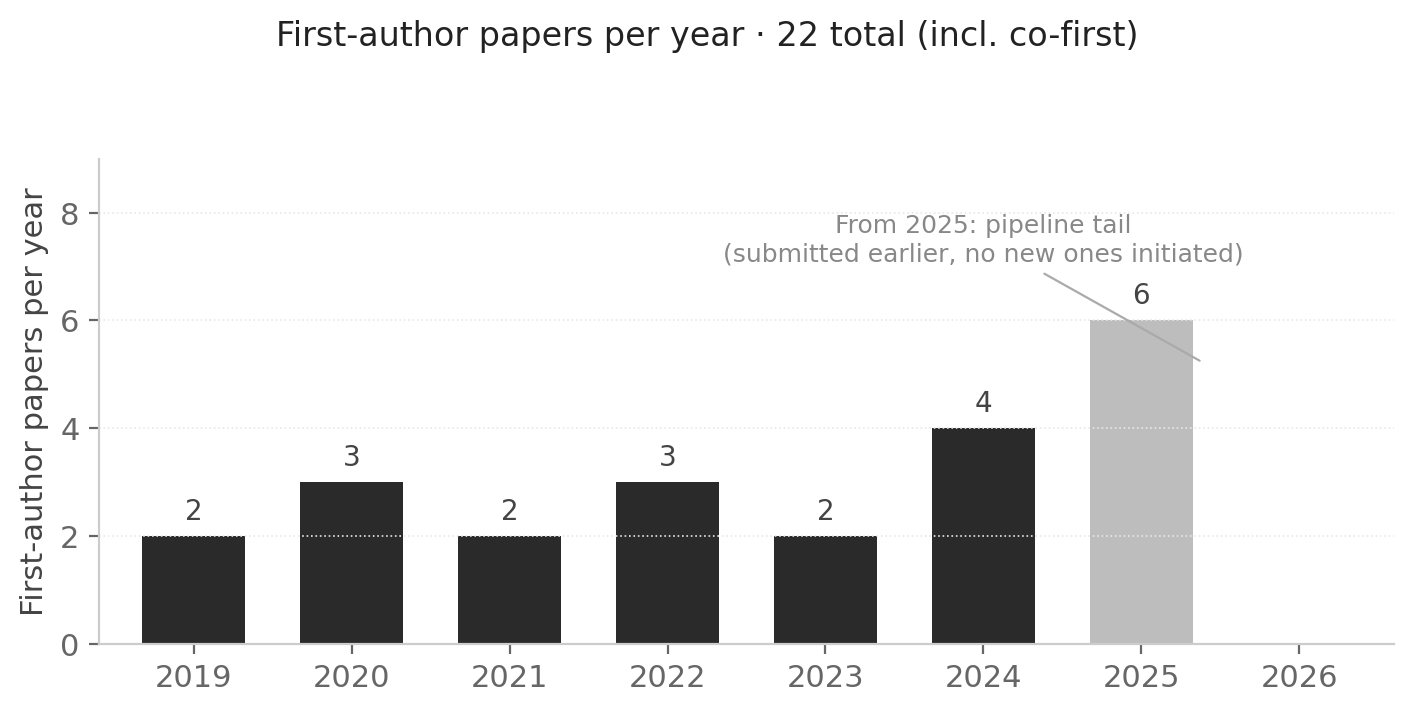

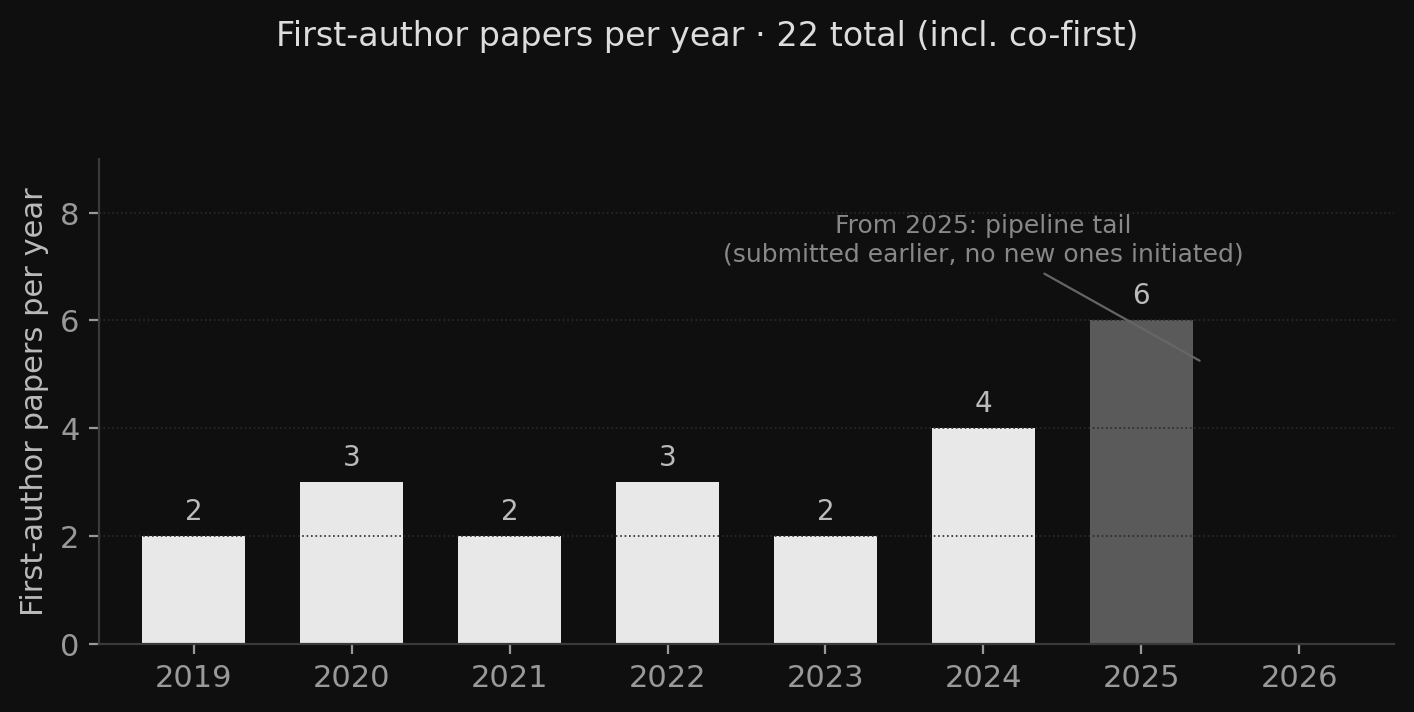

In early 2025, I stopped first-authoring academic papers. It's been over a year now.

1. I wrote academic papers

I did my PhD at USC under Prof. C.-C. Jay Kuo, working on representation learning. After that, through positions as a Research Fellow at NUS and a Scientist at A*STAR I²R in Singapore, and up until joining MiroMind, I accumulated 60+ papers in venues such as ACL, EMNLP, IEEE TASLP, IEEE TNNLS, and KDD, with a few best paper and outstanding paper awards along the way.

I'm not including that paragraph to establish credibility. I'm including it to anchor the rest of this essay in a fact: what follows isn't because I couldn't keep writing papers. It's because, after writing them for a long time, I made a choice.

2. What writing those papers taught me

Looking back, writing papers was itself a remarkably complete training process. What it trained wasn't "how to publish papers" but a few foundational skills:

- Rigor. Every number, every claim, every comparison has to be traceable back to its source. Once that habit is internalized, it transfers automatically into engineering work — when reviewing a PR, the first instinct is to ask "how was this metric measured"; when writing a design doc, the first instinct is to separate assumptions from established facts.

- Critical thinking. When you write a paper, you assume every reviewer is hunting for the holes in your logic. Over time, that "internal review" becomes a background mode, running automatically whether or not an external reviewer is in the room.

- Careful experimental design. Controlled variables, enough random seeds, confidence intervals reported, no hidden negative results. That training makes you genuinely skeptical of any ML benchmark number you read.

- Clear written expression. Compressing complex work into eight pages so a reviewer can follow it, while also selling its contribution, is a scarce kind of writing skill. A lot of people in engineering can't write a clear technical paragraph because they've never been put under this kind of pressure.

I've gone through that training. It now lives inside how I work day to day: how I design experiments on agent systems, how I write design docs, how I review my colleagues' code, how I discuss work with collaborators. I've stopped first-authoring not because I no longer need these skills, but because the channel for acquiring them has run its course. The 61st paper teaches me less than the 30th did, and the 30th taught me less than the 10th. Marginal returns shrink, and at some point the cost-benefit balance flips.

I don't want this to read as "I've finished training and now I can leave the dojo"; that tone would be too pleased with itself. The plainer version: for me, most of the training value of writing papers has already been collected. Continuing now would be more about maintaining a state than about growing.

3. How the shift happened

The shift wasn't sudden. It accumulated after I joined MiroMind and started working on agent systems. The shape of that work is very different from the shape of the NLP research I'd done before.

Agent systems get their value from "the system runs reliably, real users actually use it, and it iterates in production." The most direct test of whether a piece of work is good is whether it's being used. How many queries went through it? How often did it crash? Did the user come back a second time, a third time?

These are engineering metrics, not research metrics. To package them into a conference paper, you have to do several things: find a relatively clean benchmark and squeeze out a +N percentage point improvement on a slice of your work; rewrite the "why" into a contribution-clean narrative, stripping out all the "this had to be this way because another part of the codebase looks like that" real-world constraints; submit to the appropriate venue, wait for reviewers, handle the rebuttal, revise, wait again. Six months to a year and a half from drafting to publication. Each step pulls the artifact further from the work it was meant to describe.

4. Papers and my work started to lose correspondence

This is the central argument of this essay: for the work I'm doing now, the cost-benefit ratio of papers as an output has flipped.

Several specific places where the correspondence breaks down.

Smallest-paperable-unit work isn't the work I should be doing right now. The most valuable parts of an agent system are usually not standalone algorithmic tricks but combinations of engineering decisions: how a retrieval falls back, how a tool call times out, how state is persisted, how a prompt converges. Splitting these into "smallest publishable units" breaks the whole into fragments, and both the engineering coherence and the cost behind each decision vanish in the split.

The time scales don't match. The effective iteration cycle for production work is one or two weeks — find a bug Monday, ship a fix Wednesday, watch user behavior shift by Friday. The minimum cycle for a paper is six months. By the time the paper comes out, the work it describes may have been overturned or redesigned, and the finding may no longer be a finding.

Reviewer feedback has low signal value for my current work. Peer review at its best is an effective error-filtering mechanism, but only if the reviewer shares your working context. The people who can give me useful feedback on what I'm doing now aren't in the review pool. They're users, colleagues, and the engineers running businesses on top of these agents. A reviewer's suggestion to "consider adding a control on benchmark X" has little overlap with the path by which the system in my hands actually improves.

The packaging cost exceeds the output value. Rewriting engineering content as academic prose, removing all the "non-research" real-world constraints, is frame-shifting work. That work has its own value (it forces you to think clearly about the abstraction layer), but for what I'm doing now, the input-output ratio is no longer favorable.

5. An honest comparison of the two paths

Here are publishing papers and shipping working things, side by side. The point isn't to argue that one is better; it's to lay out the real costs and benefits of each path.

| | Publishing papers | Shipping working things |
|---|---|---|
| Pros | · Public, archival record: accumulable, citable<br>· Peer review at its best can catch errors<br>· Direct academic recognition; clear correspondence to hiring, promotion, and grant applications | · Direct, fast feedback: user falsification is an order of magnitude faster than reviewer falsification<br>· Solves real problems, not problems constructed on benchmarks<br>· The output is itself the deliverable; no repackaging required |
| Cons | · Long cycle: typically 6 months to 2 years from idea to publication<br>· High packaging cost: real work has to be reshaped into "contribution-clean" stories<br>· Peer review quality is degrading: reviewer pool overload, AI-review controversies<br>· "Smallest paperable unit" pressure pushes toward more fragmented, more incremental work | · No external archive: change companies or projects, and the work dissipates; newcomers can't trace it<br>· Doesn't help an academic career path<br>· Contributions are hard to quantify: "I kept this product running for two years" is opaque to outsiders<br>· Weak inheritance for other researchers: code, constraints, and data are usually not public |

My current judgment is that, for the kind of work I'm doing now, the trade-offs in the right-hand column fit better. But that judgment holds only for me and only for my current work; it's not a prescription for anyone else.

6. What academia is still getting right

This section isn't here for political balance; it's a genuine acknowledgment.

Peer review at its best is useful. It filters out obviously wrong work, it forces authors to polish arguments to a minimum standard of clarity, and it establishes a cross-region, cross-institution baseline of common language. These functions don't have a clean replacement.

The archival property matters even more. A paper from 2010 can still be cited, built upon, or corrected today; that's the infrastructure that lets knowledge accumulate across generations. The engineering work I'm doing doesn't have this property, and that's an inherent disadvantage of engineering work, not an advantage.

Theoretical computer science, statistics, mathematics, physics — in these fields, papers remain the only stable medium for knowledge. My reflections on the paper-as-medium do not apply to those fields. I'm talking about the specific subset of applied AI / systems work.

So this isn't "academia is dead" or "papers don't matter" — it's "for the work I'm doing now, the paper isn't the right medium." That's a judgment about a specific person's work fitting a specific output form, not a verdict on the system as a whole.

7. What I measure my work by now

Work has to be measured by something. I've switched to a few new measures:

- Does it run in production? — The minimum bar. A piece of work that runs in a demo but breaks in production isn't finished.

- How many people are actually using it? — Usage is the most honest feedback. Daily query count, unique users, return rate — those numbers don't lie.

- What's the crash frequency and recovery time? — SRE-style metrics. A robust system that survives two years of operation is itself a valuable thing.

- If open-sourced, does it get forked, get issues filed, get PRs? — Open-source activity is a decentralized, cross-institution form of peer review.

- Am I satisfied with it myself? — The most subjective and the most important. After a piece of work is done, do I have that internal "yes, this is the best I could do at this moment" judgment?

Together these constitute a new way of measuring my work. They don't have an archival property, they don't have citation counts, but in feedback speed, signal honesty, and correspondence to actual work content, they are closer to what I'm doing now than a paper would be.

A note at the end: this is a personal turn, not a prescription for anyone else. If you're in the middle of a PhD, submitting your first paper, or your work still feels accurately described by papers — keep going. The path may still be the right one for you. I'm just writing this down for people in situations similar to mine, to say that turning isn't shameful, and isn't necessarily an exit. It might just be a change of metric.